There’s a quiet threat sitting in most public communication campaigns – and it’s not a lack of budget, creative talent, or strategy. It’s something far harder to see: assumptions.

In our latest webinar, Punchy Studio founder Anthony Lam and communications strategist Rachel Abad explored how untested assumptions are quietly draining public sector budgets and killing campaigns before they ever reach their audiences.

This blog captures the key insights from our session. For the full conversation including live examples, case studies, and campaign videos, watch the recording here.

Why are we having this conversation?

We’re operating in a world with more content than ever. More campaigns, more social media, more video, more messaging. And with all of that comes a significant price tag.

But at the same time, there is a much lower tolerance for waste. Communications teams are no longer just being asked “did people see it?” – they’re being asked “did it actually change behaviour?”

Yet many communications decisions are still being driven by internal assumptions. Teams choose formats they’re comfortable with and craft messages around what the organisation wants to say, instead of what the audience needs to hear.

A recent Qualtrics study found that nearly two-thirds of senior marketers still rely on intuition for important decisions. If the assumptions behind that intuition haven’t been tested before the decision is made, you’re building something expensive on an untested foundation.

What are these audience assumptions?

Audience assumptions are what we think or guess we know about the people we’re communicating with. They show up in areas like:

- Format – What style of content will resonate?

- Tone – Does our voice feel right for this audience?

- Message – Are we saying what people actually need to hear?

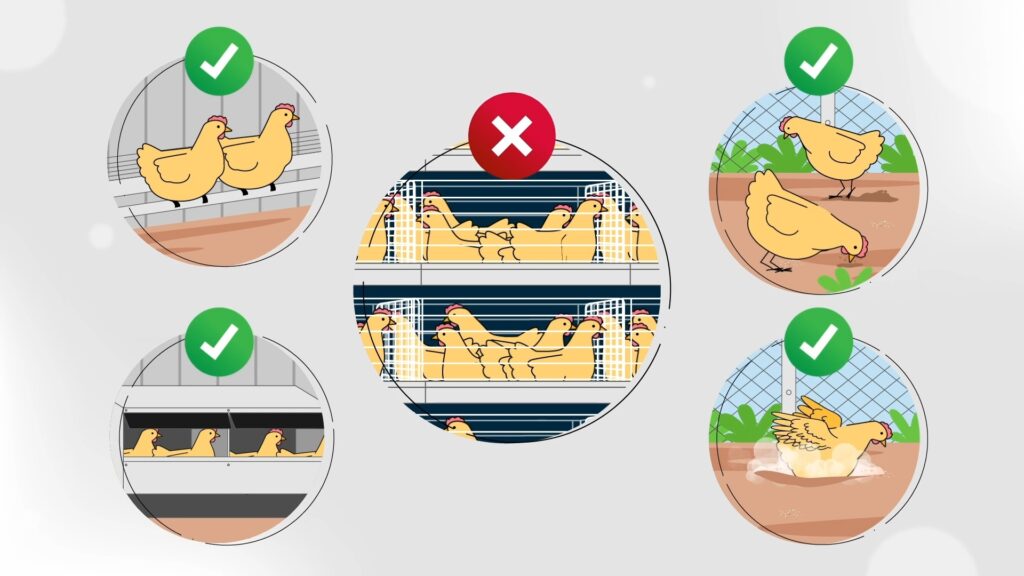

- Cultural nuance – Are we representing the right environments, food, language?

- Comprehension – Can our audience understand what we’re saying?

- Distribution – Are we putting the content where people will actually find it?

- Pace – Are we cramming ten key points into thirty seconds?

If any of these are wrong, they can quietly undermine the effectiveness of an entire campaign.

A campaign isn’t a failure because someone trolls your post, or because the creative isn’t everyone’s favourite. It’s a failure when nothing shifts. When people don’t sign up, don’t comply, don’t enquire, don’t stop, don’t start.

That’s the real outcome we need to be designing for. And to do that, we need to actually understand what drives people.

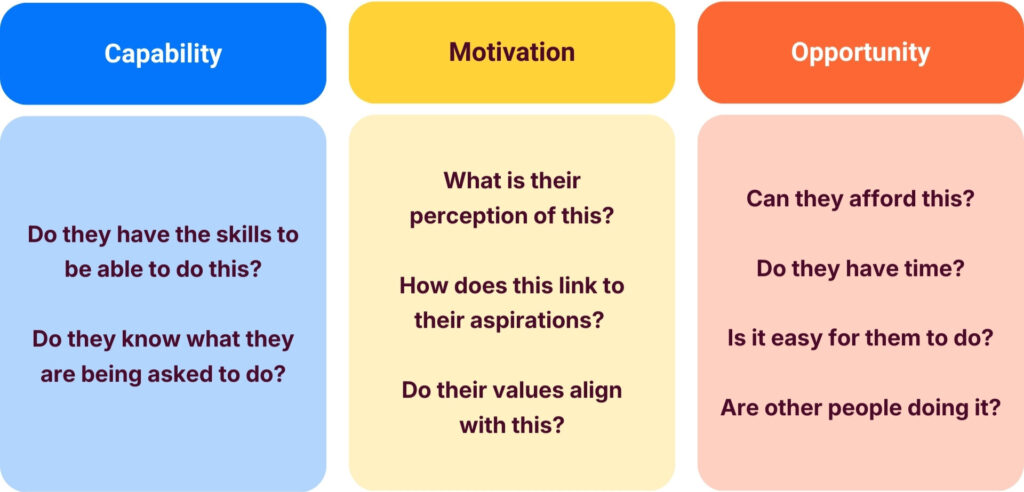

The COM-B model: understanding what drives behaviour

One of the most practical frameworks for diagnosing behaviour is the COM-B model, a behaviour change framework commonly used in public health and policy settings.

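At its core, COM-B says that behaviour only happens when three things are present:

- Capability – Do people have the knowledge and skills to do it?

- Opportunity – Does their physical and social environment make it possible?

- Motivation – Do they want to do it, or feel driven to?

All three need to be present. If any one is missing, the behaviour won’t occur.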

When we use the COM-B lens during concept testing, we start to see our assumptions more clearly:

- Are we assuming people understand what to do, when actually capability is low?

- Are we assuming they’re motivated, when actually there’s stigma, fear, or apathy?

- Are we assuming they have access, when opportunity is the real barrier?

COM-B helps us diagnose these gaps before we start, instead of after the campaign has launched and the money has been spent. Once you can diagnose a gap, you can strategise and design for it.

The concept testing framework

Once we’ve examined behaviour through the COM-B lens, something interesting happens: we’re forced to admit what we don’t know. And that’s actually an empowering place to be, because it gives us the questions we need to go and get real answers.

This is where concept testing comes in.

Concept testing doesn’t have to mean a massive, months-long research project. At its core, it simply means talking to the people you’re trying to reach before you launch. That might look like a small group conversation with five or six people from your audience, a quick concept check with a community organisation you already work with, or showing a storyboard and script to a handful of people and asking a few simple questions.

A few hours of testing upfront is almost always cheaper than fixing a campaign that missed the mark after launch.

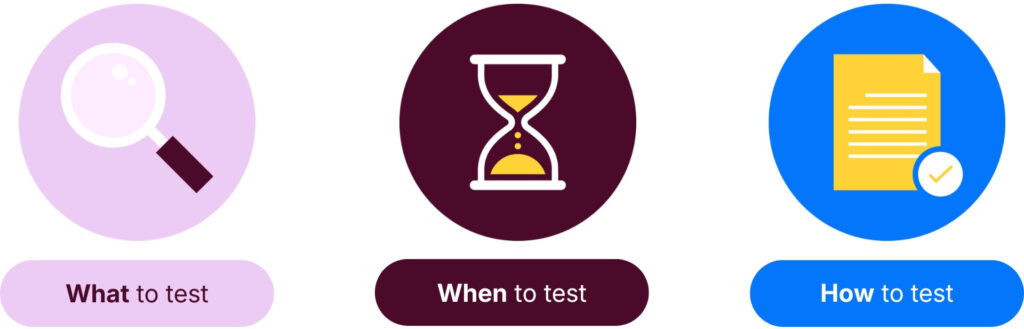

What to test

Testing doesn’t require a finished product. In fact, it’s often better when the work isn’t finished yet, as that’s when feedback is easiest to act on. You can test:

- Scripts – Does the message actually make sense?

- Storyboards – Does the story land the way you intended?

- Visual direction – Which style do people feel most connected to?

- Tone – Does it sound like something they’d respond to?

- Call to action – Does it actually make people want to do something?

- Narrative framing – Does the overall framing resonate?

And remember: we’re not testing whether people like the assets. We’re testing whether they would change behaviour.

When to test

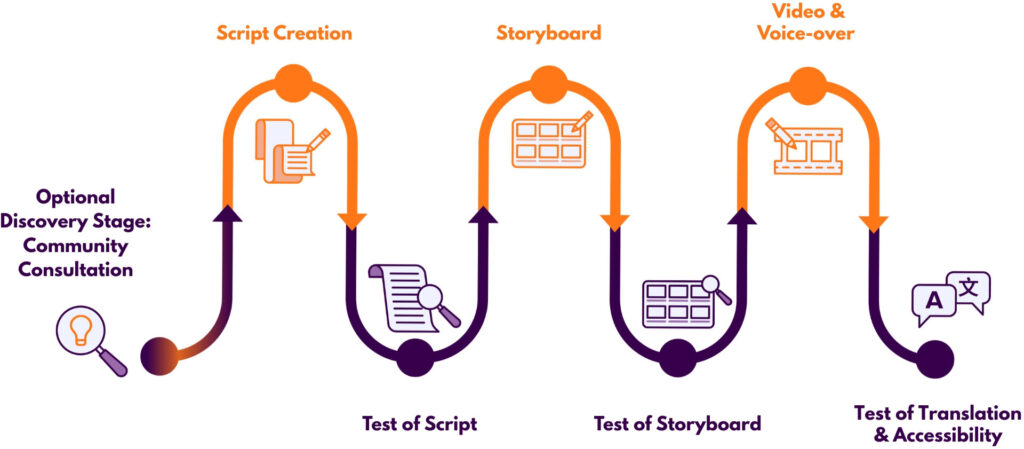

Concept testing can happen at multiple stages of the creative process. The most common approach is a single round of testing at the storyboard phase, just before production is finalised, so there’s still time to refine the concept.

Importantly, testing typically runs in parallel with production. It rarely adds significant time, usually only a few days to recruit participants and synthesise insights. The biggest factor affecting timing is simply access to participants.

How to test

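Testing can take a few different forms, from a small group conversation to a quick concept check with a community organisation you already work with, or a walkthrough of a storyboard and script with a handful of people.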

When running sessions, build a discussion guide to keep the conversation structured, but keep it flexible. The goal is to create a space where people feel comfortable sharing honest opinions. Record everything so you can refer back.

If you’re asking people for their time, always compensate them. A gift card goes a long way toward making participation easy and appreciated.

When it comes to participant numbers, you don’t need hundreds. Concept testing isn’t about statistical significance; it’s about directional insight. By around 8–12 participants, you’re usually hearing the same themes repeated. Researchers call this insight saturation. Running two smaller groups rather than one large one also helps reduce the risk of a single strong voice dominating the conversation.

What testing revealed in practice

Butterfly Foundation – Arabic eating disorders campaign

This project aimed to raise awareness of eating disorders within Arabic-speaking communities, challenge cultural stigma, and provide support pathways. The audience wasn’t just individuals who were struggling; it was also families and healthcare professionals.

Authenticity was everything. Ethnolink conducted real interviews with Arabic-speaking community members to understand lived experiences. Professional actors were used to tell those stories to protect privacy while preserving authenticity.

But what concept testing revealed went far beyond language. Certain scenes, like a father and daughter having a direct conversation about eating disorders, seemed reasonable on paper. Testing revealed they would feel culturally inaccurate, so those scenes were reshaped.

The campaign resulted in 1,300+ landing page conversions and 96% of surveyed participants reported increased awareness.

Lung Foundation – Vaping, tradies and lung health

Young tradies are a notoriously hard audience to reach. Get the tone wrong and it feels like mockery. Get the visuals wrong and it feels off. Get the language wrong and it gets laughed off.

So the team asked them. Two rounds of concept testing explored tone, visual direction, the emotional drivers behind vaping, social pressures, and barriers to quitting.

Here’s what they found:

- Terminology – Technical language about chemical dangers was correct but wasn’t landing. It was simplified and shown visually for better clarity.

- Visuals – The animated vapes didn’t look like the ones tradies actually used. The character had the wrong PPE. These details were fixed.

- Distribution – When asked about a WhatsApp-based Quit service, the tradies said they didn’t use WhatsApp and wouldn’t download it just to access it. But Instagram? Maybe.

That last insight is critical: channel decisions are behavioural decisions. The right message in the wrong place is still a failed campaign.

With those changes made after concept testing, the campaign generated over 17,000 clicks through to Quitline services.

Post-launch distribution: don’t forget this step

Concept testing doesn’t just sharpen your creative; it also informs where your content needs to live. During audience conversations, it’s worth asking:

- What channels or platforms do they actually use?

- How do they find out about new things?

- What level of engagement do they currently have with your organisation?

- Is word-of-mouth or community endorsement important to them?

If your audience is already highly engaged, then organic channels may be enough. If not, it’s worth considering partner distribution or paid media.

Either way, the answer should come from the audience, not from internal assumptions about where they spend their time or how they consume their content.

To summarise

The assumptions you’re making about your audience (their motivation, their channels, their comprehension, their barriers) are your biggest budget risk.

When those assumptions go untested, you’re building strategy on guesswork. And guesswork can be expensive.

If assumptions are the risk, then insight is the risk mitigation. You de-risk your communications by asking your audience, testing your creative before going to market, and validating your message, tone, visuals, and call to action before production is locked in.

That’s what moves campaigns from simply being seen to actually shifting behaviour.

Want to work with us?

Punchy Studio is an award-winning visual communications agency working across government, education, NFP, and enterprise. We create meaningful content that shifts behaviour and empowers communities.

To find out more about concept testing, co-design, or starting a video project, get in touch with the Punchy team.